Teaching a Recurrent Neural Network (RNN) to generate manifold objects for 3D Printing.

Sept 15, 2016 by Jeremy Ellis Twitter: @rocksetta

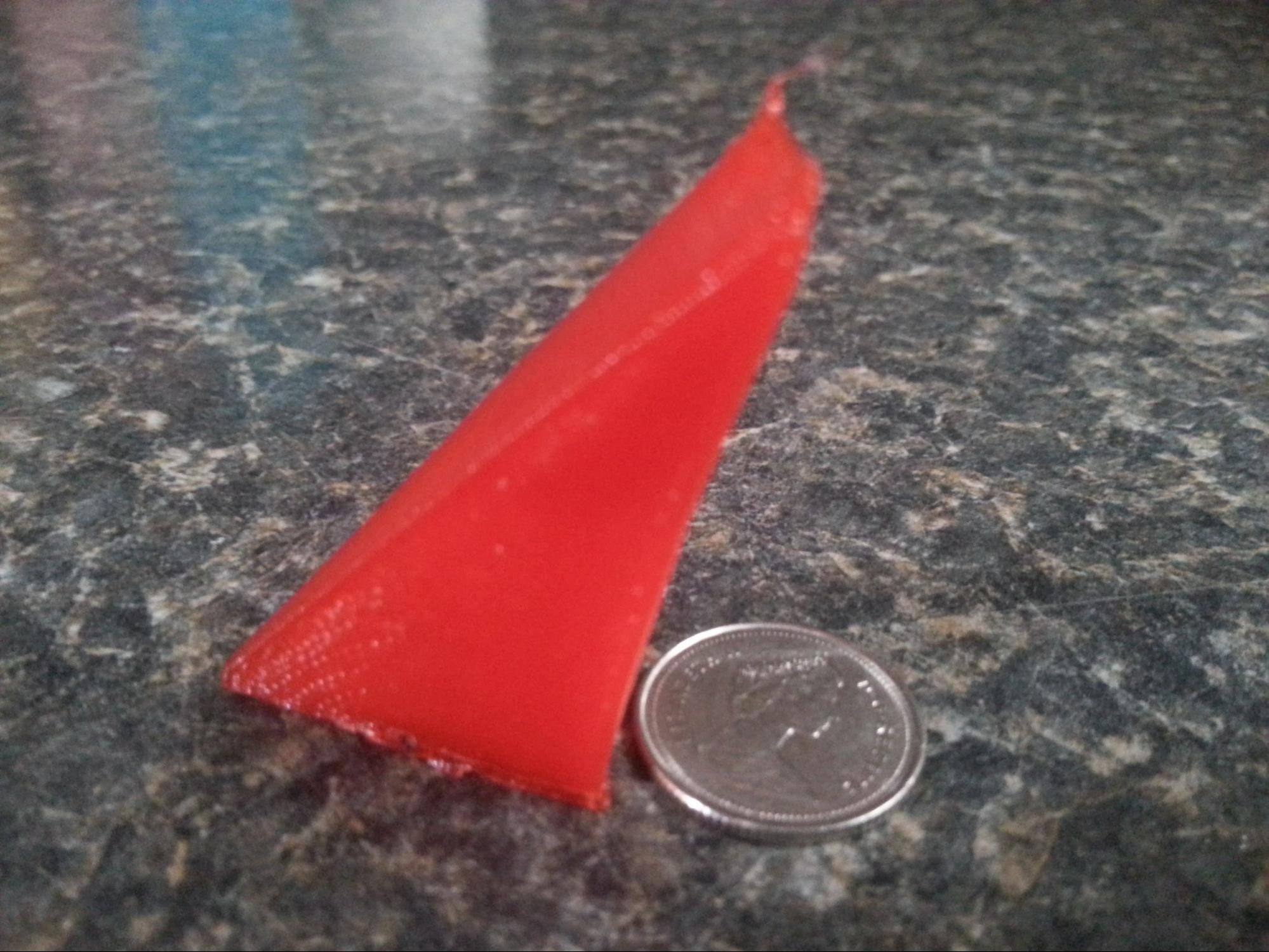

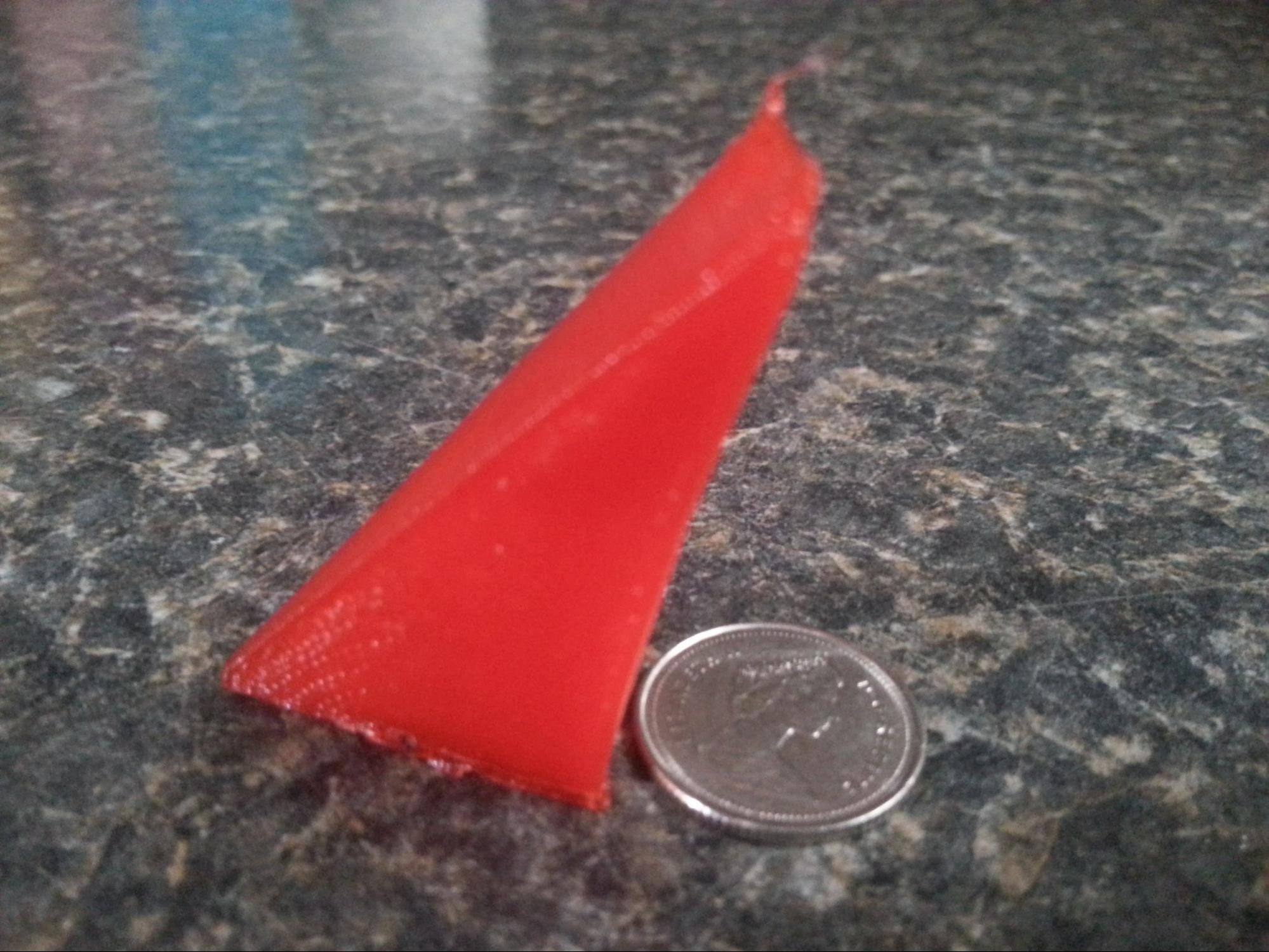

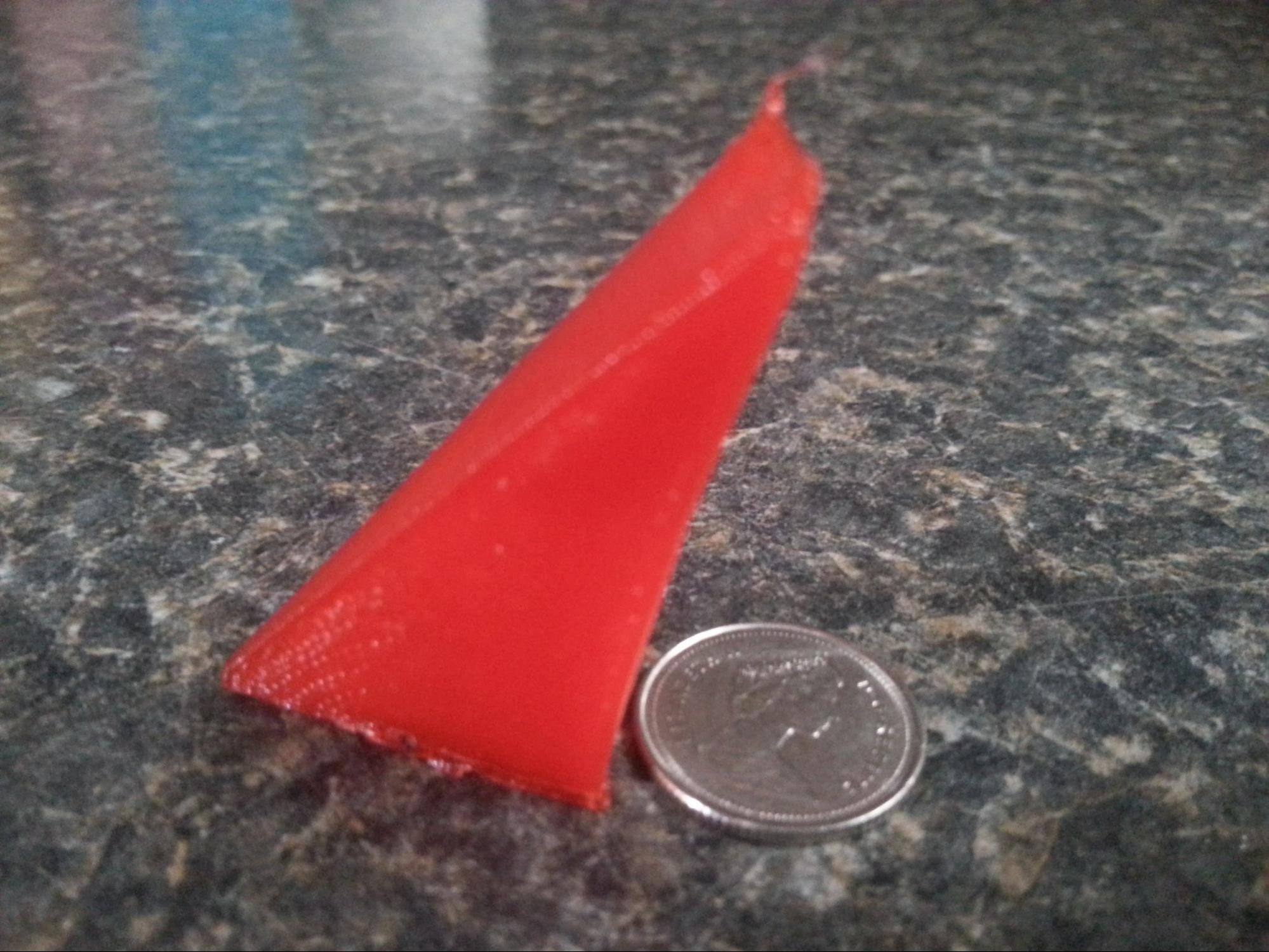

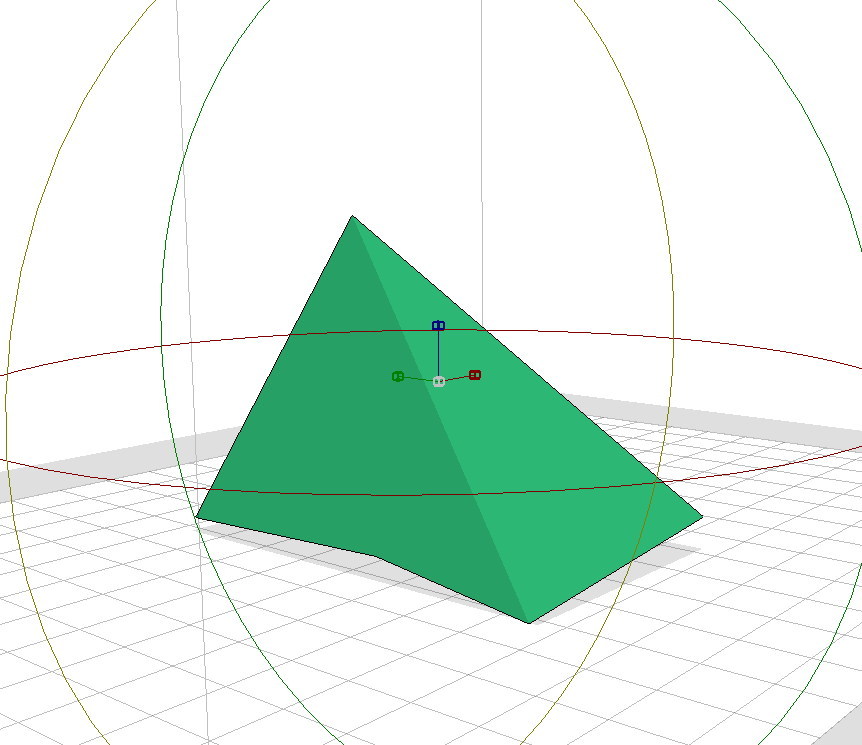

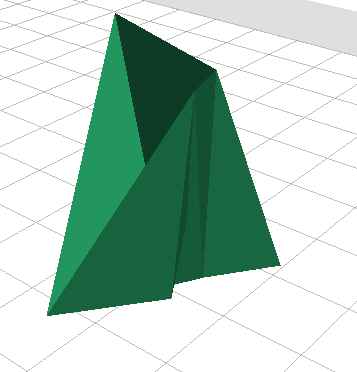

The first simple print looks like this.

That is not very impressive unless you understand that the generated object file was completely manifold, which means it was watertight (sealed) and ready for 3D printing.

So how did I do it?

I started with the GitHub repository at

https://github.com/sherjilozair/char-rnn-tensorflow

which ports Andrej Karpathy's char-rnn to TensorFlow. It trains a deep learning model that generates Shakespeare-style prose from a text file in the folder data/tinyshakespeare.

For me it produced a wonderful batch of nonsense writing, such as

Maraoz's adaptation was to generate music using ABC music notation.

Here is Maraoz's very well-written blog post about what he did:

https://maraoz.com/2016/02/02/abc-rnn/

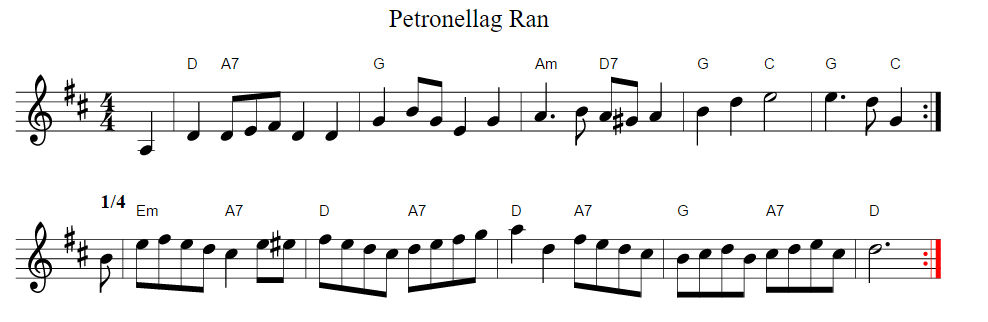

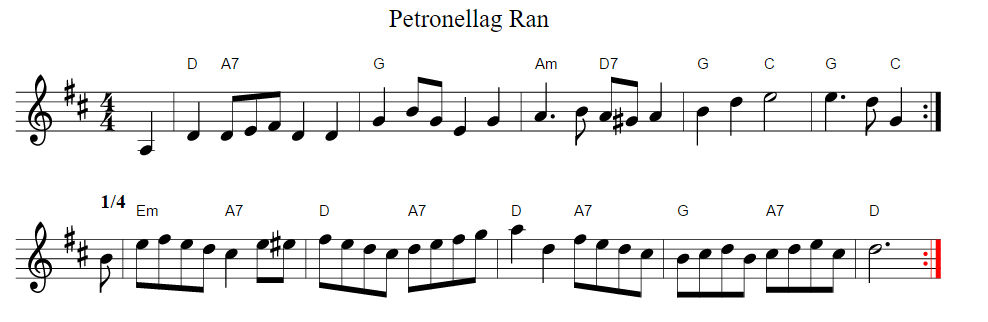

When I repeated what Maraoz did with ABC music, I got this output.

That output can be converted into this sheet music using the converter on my site, rocksetta.com, which is not the most user-friendly but works.

Listen to the song "Petronellag Ran" at https://clyp.it/svqldqdu

So a text-based RNN can learn to generate text in a given style, as long as it is trained on a sensible amount of example text about that topic!

I have spent a few months trying to get Google's TensorFlow-based Magenta music generation program working, as you can see from my GitHub repository at https://github.com/hpssjellis/google-magenta-midi-music-on-linux-hello-world, so when I came upon this simple approach I was very impressed.

It can take lots of plain text files, get a sense of how the text is structured and generate its own plain text. In fact, it keeps generating a continuous stream of as much plain text as you like! (I generate lots of text and then cut and paste chunks that have a sensible starting and ending point.)
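The char-rnn model learns to predict the next character from the characters before it, then samples one character at a time, which is why the output is an endless stream. The Python sketch below shows that same train-then-sample loop with a much simpler stand-in: a character bigram model (not an RNN, and not code from the char-rnn-tensorflow repository) that counts which characters follow which and samples from those counts. The function names are hypothetical.

```python
import random
from collections import Counter, defaultdict


def train_bigram(text):
    """Count how often each character is followed by each other character."""
    counts = defaultdict(Counter)
    for current, following in zip(text, text[1:]):
        counts[current][following] += 1
    return counts


def generate(counts, start, length, seed=None):
    """Sample up to `length` characters, one at a time, from the bigram counts."""
    rng = random.Random(seed)
    out = [start]
    for _ in range(length - 1):
        followers = counts.get(out[-1])  # .get avoids creating empty entries
        if not followers:  # this character was never followed by anything: stop
            break
        chars = list(followers.keys())
        weights = list(followers.values())
        out.append(rng.choices(chars, weights=weights)[0])
    return "".join(out)


if __name__ == "__main__":
    model = train_bigram("to be or not to be that is the question ")
    print(generate(model, "t", 60, seed=1))
```

An RNN replaces the single-character lookup with a learned hidden state that summarizes everything it has read so far, which is what lets it keep track of longer structures than one character of context allows.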

I teach 3D printing, robotics and computer programming. If this RNN can take text and generate text, could it perhaps take 3D objects and generate 3D objects?

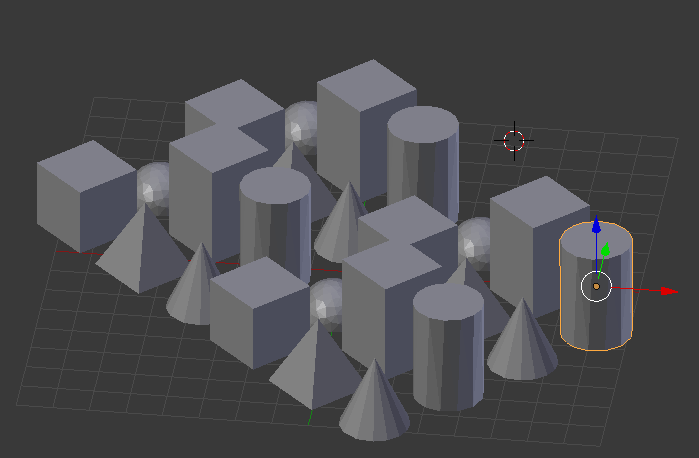

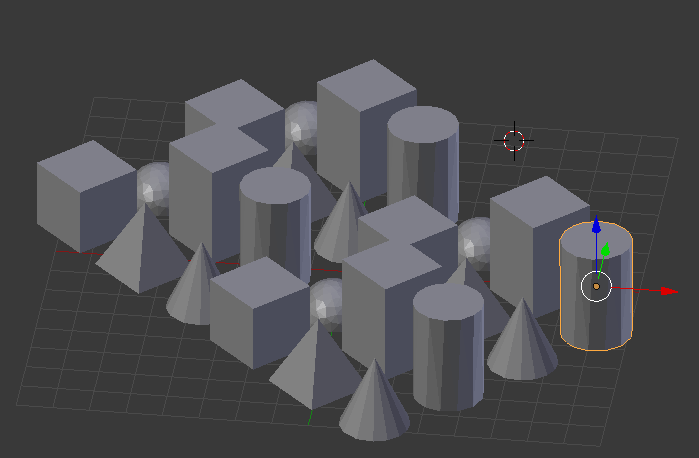

I then tried training my RNN on 3D .obj files. These are text-based representations of 3D objects that can be converted into .stl files, which are then turned into g-code files for 3D printing.
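To show what the RNN actually reads, here is a minimal, hypothetical Python sketch that writes the text of a .obj file for a cube. In the .obj format each `v` line is a vertex (x y z) and each `f` line is a face listing 1-based vertex indices. Real exporters such as Blender's typically add further lines (comments, normals, materials) that are left out here.

```python
def cube_obj(size=1.0):
    """Return the text of a minimal .obj file for an axis-aligned cube."""
    s = size
    vertices = [
        (0, 0, 0), (s, 0, 0), (s, s, 0), (0, s, 0),  # bottom square (z = 0)
        (0, 0, s), (s, 0, s), (s, s, s), (0, s, s),  # top square (z = size)
    ]
    # Each face lists 1-based vertex indices (.obj counts from 1),
    # ordered counter-clockwise when seen from outside the cube.
    faces = [
        (1, 4, 3, 2),  # bottom
        (5, 6, 7, 8),  # top
        (1, 2, 6, 5),  # front
        (2, 3, 7, 6),  # right
        (3, 4, 8, 7),  # back
        (4, 1, 5, 8),  # left
    ]
    lines = ["o Cube"]
    lines += [f"v {x:.6f} {y:.6f} {z:.6f}" for x, y, z in vertices]
    lines += ["f " + " ".join(str(i) for i in face) for face in faces]
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    print(cube_obj(1.0))
```

Every one of the cube's 12 edges is shared by exactly two faces, which is part of what makes it watertight.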

I found basic shapes and scaled them to widths between 1 and 2 mm.

Then I rotated them into various positions and exported each shape as an individual .obj file. The same shape in a different position generates a different .obj text file.
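Scaling or rotating a shape changes its vertex coordinates, which is why the same shape yields different training text. The hypothetical helper below sketches this in Python for a simple .obj file whose vertices are `v x y z` lines: it scales each vertex, rotates it about the Z axis and copies every other line unchanged. It is not the author's export workflow, and it does not rotate vertex normals (`vn` lines).

```python
import math


def transform_obj(obj_text, scale=1.0, degrees_z=0.0):
    """Scale every "v x y z" line, then rotate it about the Z axis; copy other lines as-is."""
    a = math.radians(degrees_z)
    cos_a, sin_a = math.cos(a), math.sin(a)
    out = []
    for line in obj_text.splitlines():
        parts = line.split()
        if parts and parts[0] == "v":
            x, y, z = (float(p) * scale for p in parts[1:4])
            xr = x * cos_a - y * sin_a  # standard 2D rotation in the XY plane
            yr = x * sin_a + y * cos_a
            out.append(f"v {xr:.6f} {yr:.6f} {z:.6f}")
        else:
            out.append(line)  # faces keep pointing at the same vertex indices
    return "\n".join(out) + "\n"


if __name__ == "__main__":
    tri = "o Tri\nv 1 0 0\nv 0 1 0\nv 0 0 1\nf 1 2 3\n"
    print(transform_obj(tri, scale=2.0, degrees_z=90.0))
```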

Then I concatenated the entire set of files into a single input.txt file using the DOS command

I then trained the RNN using that input.txt file.
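As a rough cross-platform alternative to the DOS command, the concatenation step can be sketched in Python. The function name is hypothetical. It joins every .obj file in a folder into one input.txt (the file name char-rnn-tensorflow reads from its data directory), matches the extension case-insensitively since the files above were exported as .OBJ, and adds a newline between files so one file's last line cannot run into the next file's first line.

```python
from pathlib import Path


def concatenate_obj_files(folder, output_name="input.txt"):
    """Join every .obj file in `folder` (sorted by name) into one training file."""
    folder = Path(folder)
    # Match .obj and .OBJ alike; the output is a .txt file, so it is never picked up.
    obj_files = sorted(
        p for p in folder.iterdir() if p.is_file() and p.suffix.lower() == ".obj"
    )
    output = folder / output_name
    with output.open("w", encoding="utf-8") as out:
        for path in obj_files:
            text = path.read_text(encoding="utf-8")
            out.write(text)
            if text and not text.endswith("\n"):
                out.write("\n")  # keep the next file from starting mid-line
    return output, len(obj_files)
```

Sorting by name keeps the order reproducible between runs; shuffling the files first would be an equally reasonable choice for training data.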

After about 100,000 training loops, which took about 8 hours on an Ubuntu 15.04 laptop with 6 GB of RAM running TensorFlow 0.10 with CPU support only, the RNN was generating simple manifold shapes about 1/3 of the time. The other 2/3 was a mess that I could not even load into Blender using its .obj import.
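Separating the usable third from the mess meant loading files into Blender. As a rough pre-filter before that step, the hypothetical Python sketch below rejects text that does not even parse as .obj, then applies one necessary condition for a closed, watertight mesh: every edge must be shared by exactly two faces. It is a simplified stand-in, not Blender's check. It ignores face orientation, self-intersections and vertices where separate surfaces touch, so a file that passes still needs checking in Blender.

```python
from collections import Counter


def parse_faces(obj_text):
    """Return faces as tuples of 0-based vertex indices; raise ValueError if malformed."""
    n_vertices = 0
    faces = []
    for line in obj_text.splitlines():
        parts = line.split()
        if not parts:
            continue
        if parts[0] == "v":
            if len(parts) < 4:
                raise ValueError(f"vertex needs x y z: {line!r}")
            for p in parts[1:4]:
                float(p)  # raises ValueError if a coordinate is not a number
            n_vertices += 1
        elif parts[0] == "f":
            face = []
            for p in parts[1:]:
                i = int(p.split("/")[0])  # vertex index before any "/"
                if i == 0:
                    raise ValueError("0 is not a valid .obj index")
                # Positive indices are 1-based; negative ones count back from the latest vertex.
                face.append(i - 1 if i > 0 else n_vertices + i)
            faces.append(tuple(face))
    for face in faces:
        if any(i < 0 or i >= n_vertices for i in face):
            raise ValueError(f"face refers to a missing vertex: {face}")
    return faces


def is_closed_edge_manifold(obj_text):
    """Necessary (not sufficient) watertightness test: every edge lies on exactly two faces."""
    try:
        faces = parse_faces(obj_text)
    except ValueError:
        return False  # too garbled to be a usable .obj file
    edge_counts = Counter()
    for face in faces:
        if len(face) < 3 or len(set(face)) != len(face):
            return False  # degenerate face: too few or repeated vertices
        for a, b in zip(face, face[1:] + face[:1]):
            edge_counts[(min(a, b), max(a, b))] += 1  # undirected edge
    return bool(edge_counts) and all(n == 2 for n in edge_counts.values())
```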

The usable text files were loaded into Blender, checked for being manifold and exported as .stl files, which were then converted to g-code specific to the individual 3D printer.
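Blender did the .obj to .stl export here. To show what that conversion involves, the hypothetical Python sketch below writes ASCII .stl text. An .stl file stores only triangles, each with a facet normal, so every polygon face is split into a fan of triangles. The sketch assumes positive vertex indices and convex faces (fan triangulation can produce wrong triangles for concave polygons).

```python
import math


def obj_to_ascii_stl(obj_text, name="rnn_object"):
    """Convert simple .obj text (v and f lines only) to ASCII .stl text."""
    vertices = []
    faces = []
    for line in obj_text.splitlines():
        parts = line.split()
        if not parts:
            continue
        if parts[0] == "v":
            vertices.append(tuple(float(p) for p in parts[1:4]))
        elif parts[0] == "f":
            # Vertex index before any "/", converted from 1-based to 0-based.
            faces.append([int(p.split("/")[0]) - 1 for p in parts[1:]])

    lines = [f"solid {name}"]
    for face in faces:
        # Fan triangulation: (v0, v1, v2), (v0, v2, v3), ... (fine for convex faces).
        for i in range(1, len(face) - 1):
            a, b, c = vertices[face[0]], vertices[face[i]], vertices[face[i + 1]]
            u = [b[k] - a[k] for k in range(3)]
            w = [c[k] - a[k] for k in range(3)]
            # Normal from the cross product, following the right-hand rule on vertex order.
            n = [u[1] * w[2] - u[2] * w[1],
                 u[2] * w[0] - u[0] * w[2],
                 u[0] * w[1] - u[1] * w[0]]
            length = math.sqrt(sum(x * x for x in n)) or 1.0  # degenerate: keep zero normal
            n = [x / length for x in n]
            lines.append(f"  facet normal {n[0]:e} {n[1]:e} {n[2]:e}")
            lines.append("    outer loop")
            for x, y, z in (a, b, c):
                lines.append(f"      vertex {x:e} {y:e} {z:e}")
            lines.append("    endloop")
            lines.append("  endfacet")
    lines.append(f"endsolid {name}")
    return "\n".join(lines) + "\n"
```

The resulting .stl text is what a slicer turns into the printer-specific g-code.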

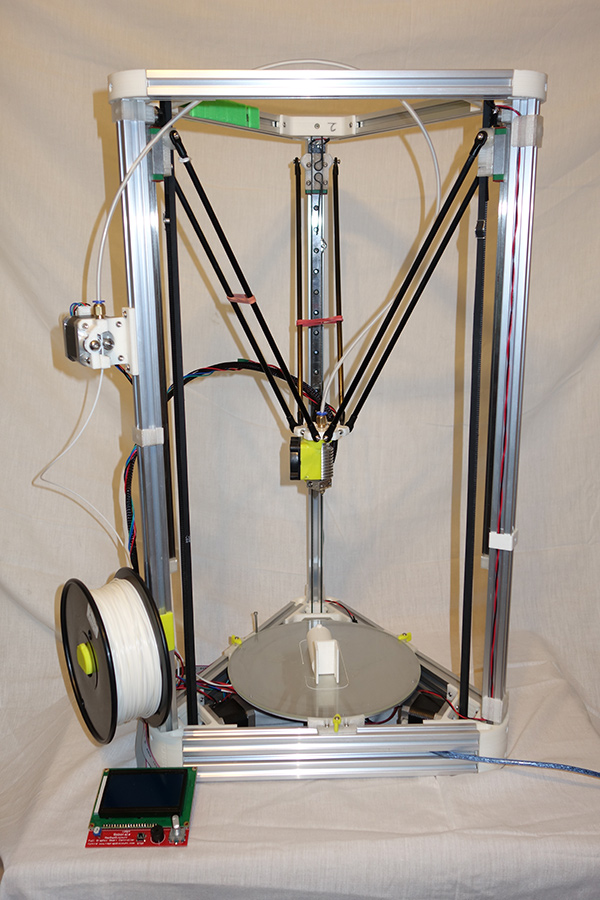

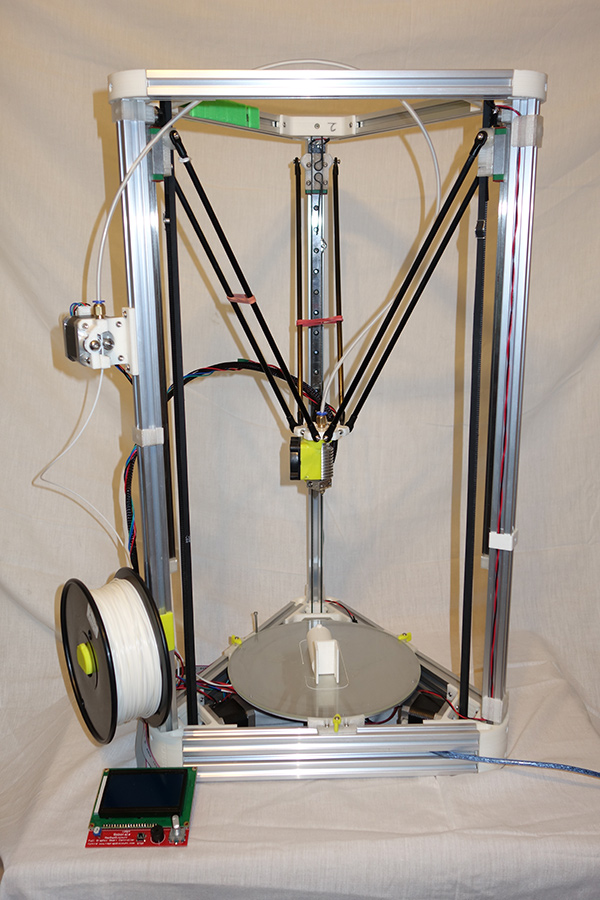

I have a homemade Kossel printer:

http://rocksetta.com/3d-print/

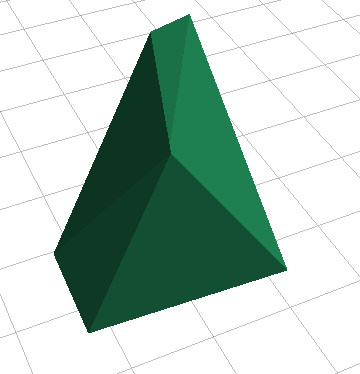

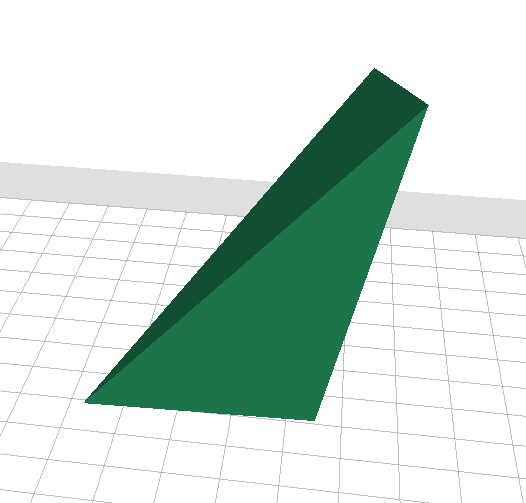

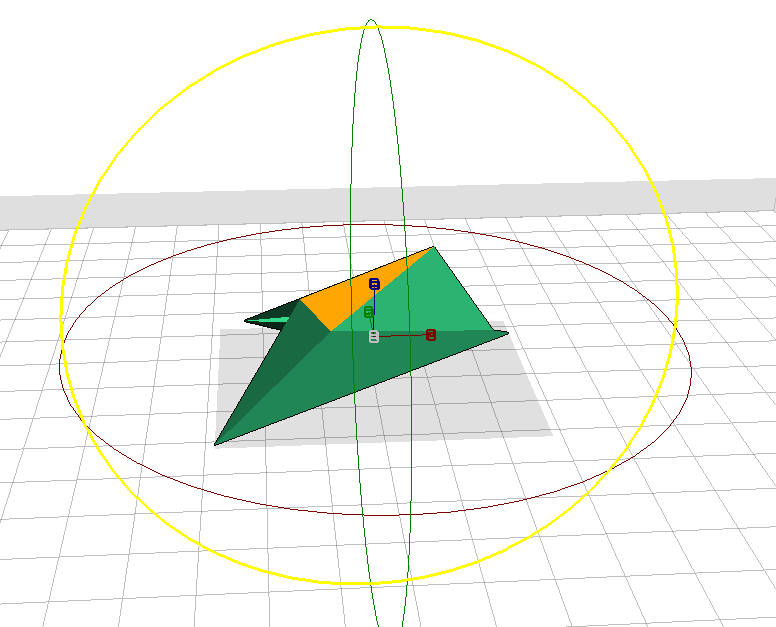

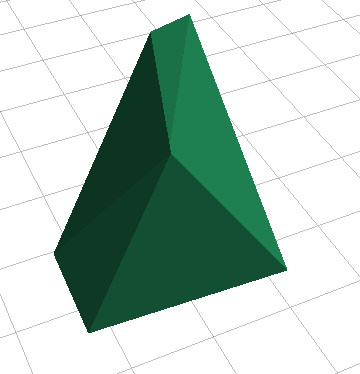

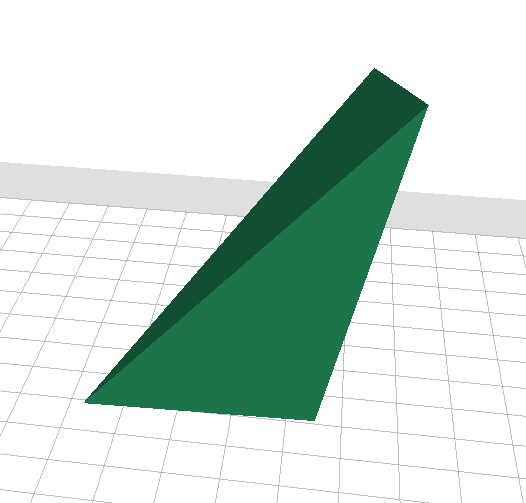

Here is the first output .obj file that I created.

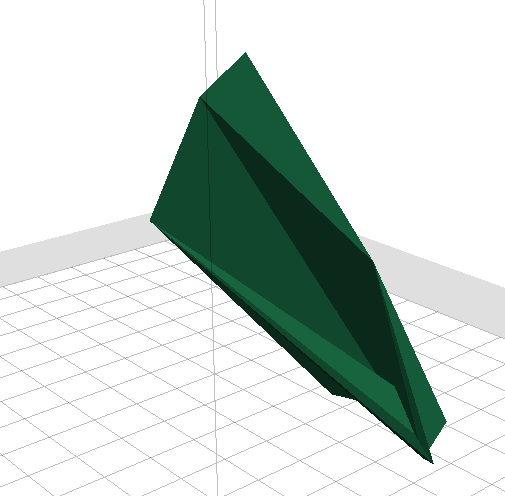

Here is the .stl view. (Note: I scaled up the images because the training objects were only 1 mm to 2 mm across, which kept the object text files very small. Once the .stl file is generated, it is a simple matter to scale it by 1000% to make it easier to print.)

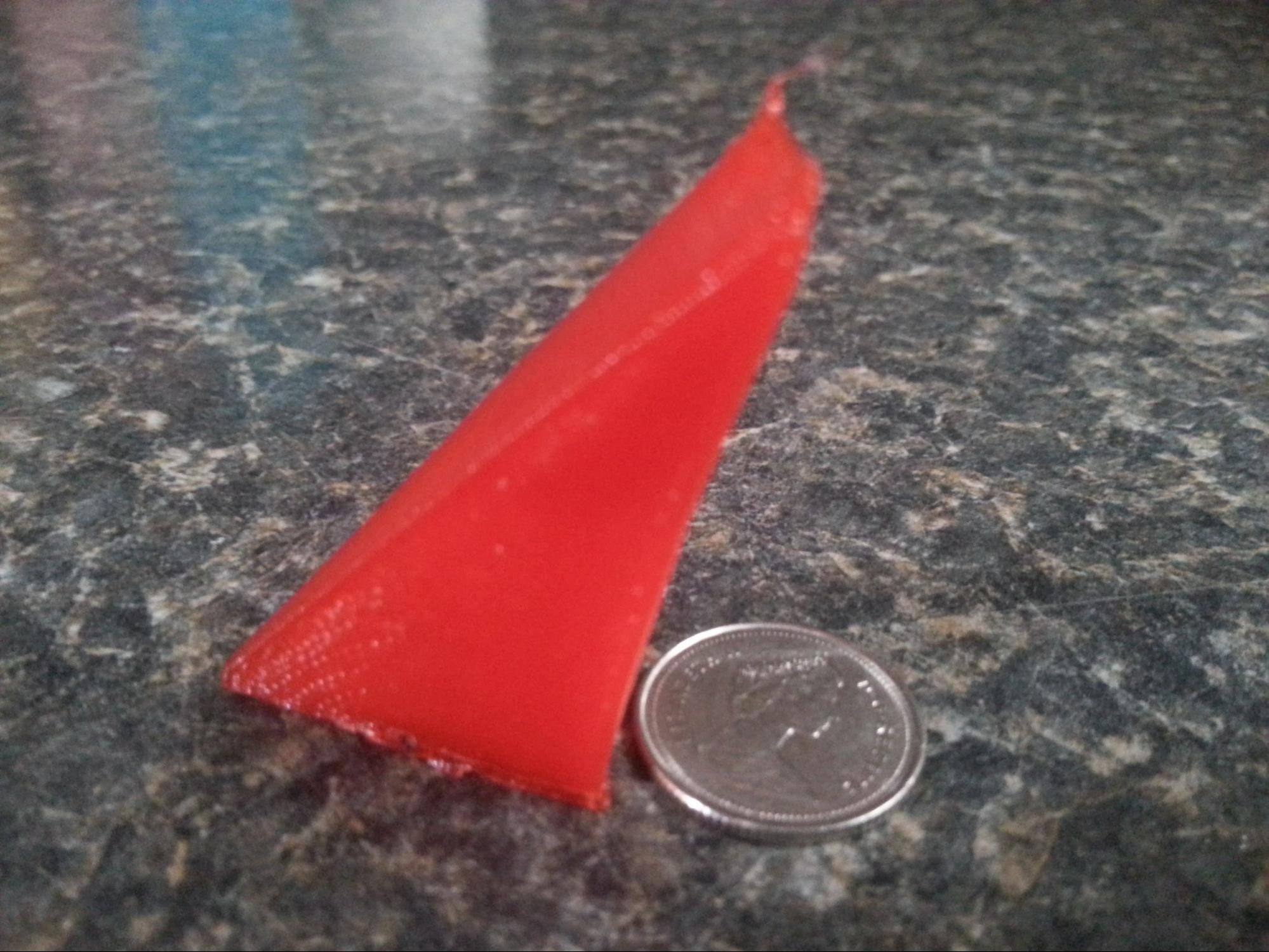

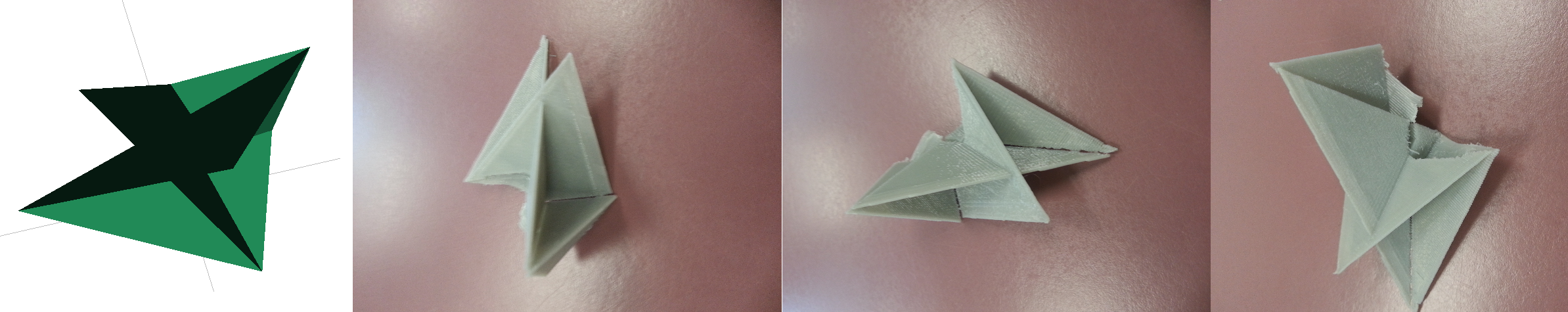

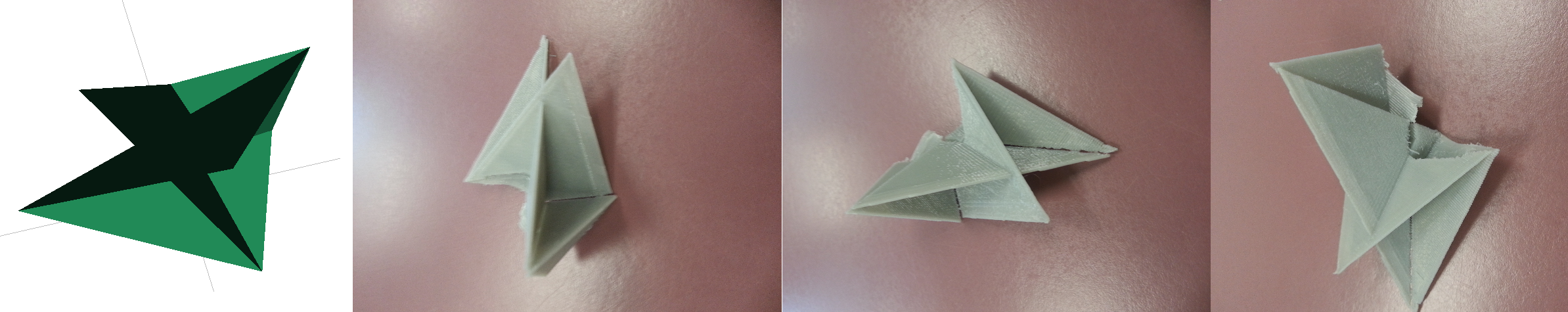

Here is the printed product again

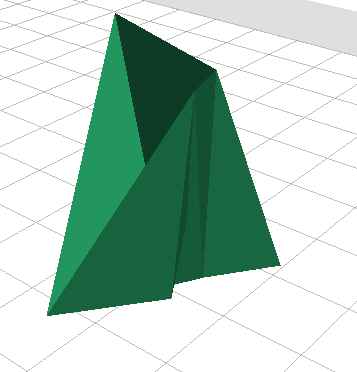

Here are other builds:

Strangely, the further I get with training my RNN, the harder it is to get manifold prints. At about 300,000 training loops only about 1/6 of the RNN's text output is manifold, but that is probably because the shapes are more complex.

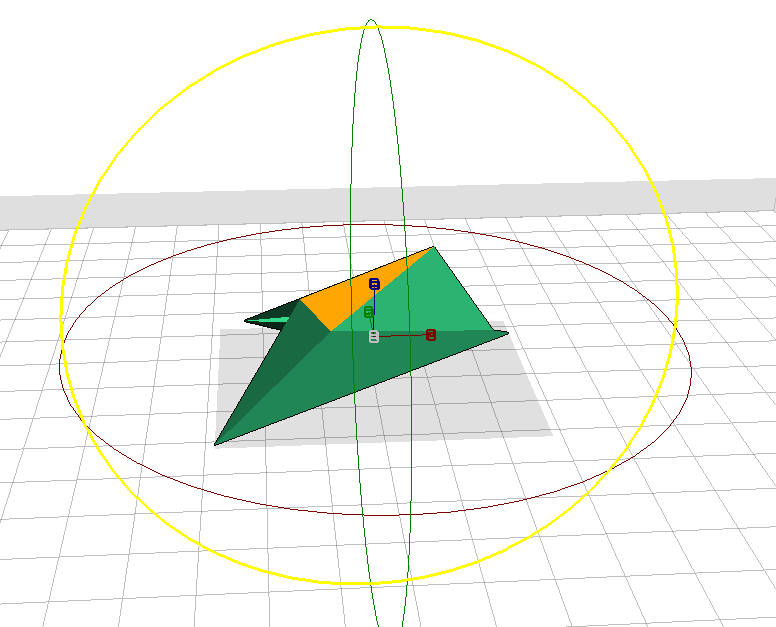

At 300,000 training loops I got this print

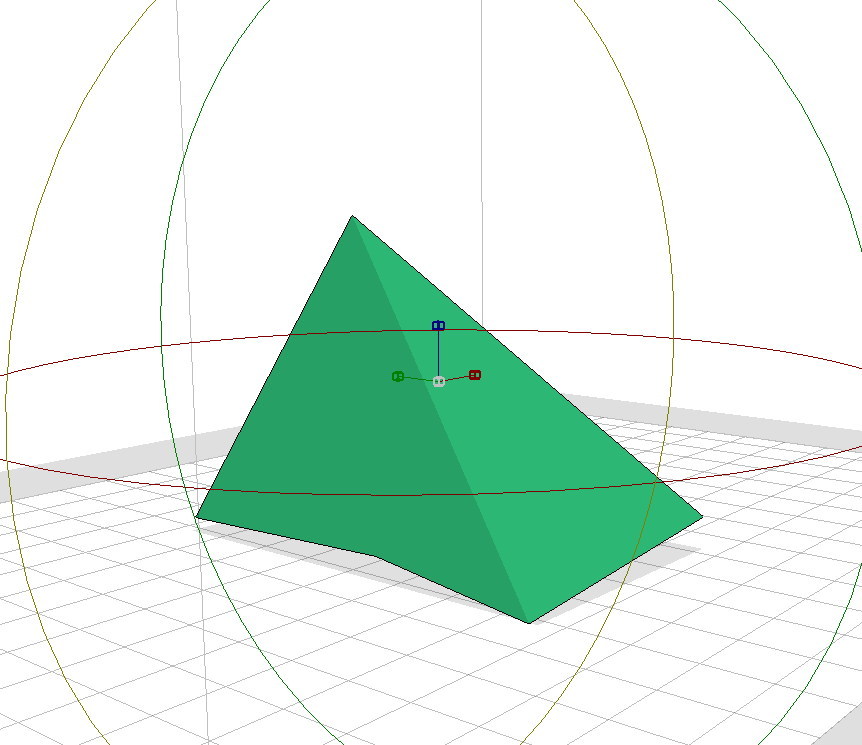

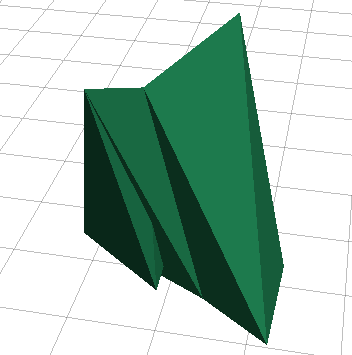

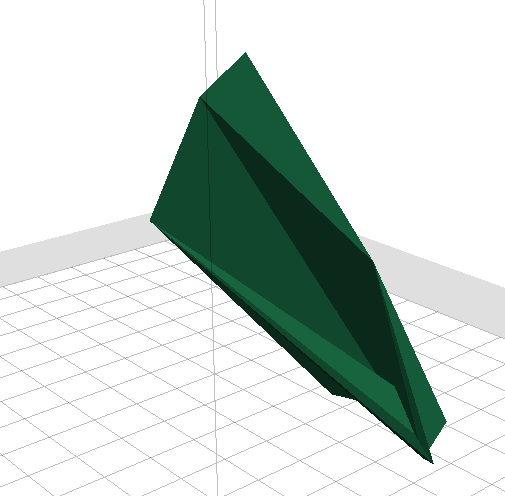

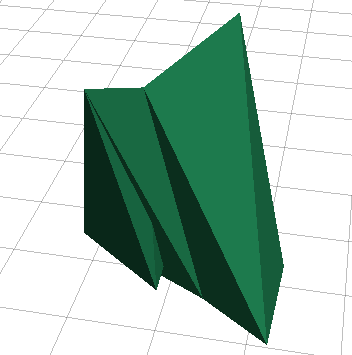

At 500,000 training loops I got these .stl files. All three images show the same object from different angles.
